It has been said that the worst liar is the one who lies to himself. Web stats make this easy. So what are the ways that we can fool ourselves?

Are you sure the numbers are correct in the first place?

Often all we know about our site is a string of numbers magically produced from a black box. How close these numbers are to reality is anyone’s guess. Unfortunately too many people just take them at face value and hope against hope that they might be somewhere near the truth.

The only way to test a black box is to feed it known inputs, observe the outputs and see how they relate. For example, if you request a test page 100 times, does it show up in the stats as 100 page views? Unfortunately you can’t test everything, so you will probably still end up with a string of numbers that has to be taken with a big pinch of salt. One of the big problems with web stats is that when an error does occur the figures might not be out by just a few percentage points; they are just as likely to be out by several orders of magnitude.

So what can we do? One way is to check the figures against another analytics tool such as Google Analytics, but be careful, as some of the errors inflating your web stats might simply be replicated there. Another is to always keep an eye open for anomalies. Unique browsers just fallen by 33%? Look for a fault in the web stats first. Whichever way you look at it, web stats can be a minefield, and by themselves they may not always be a good description of reality.
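As a rough illustration of both checks, here is a minimal Python sketch. It assumes you have somehow exported the reported figures from your stats tool by hand; the function names, the 25% threshold and all the numbers are made up for the example and are not part of any analytics product.

```python
from __future__ import annotations


def relative_error(known_hits: int, reported_hits: int) -> float:
    """Fractional difference between what the tool reported and what we know we sent."""
    if known_hits <= 0:
        raise ValueError("known_hits must be positive")
    return (reported_hits - known_hits) / known_hits


def flag_anomalies(daily_counts: list[int], threshold: float = 0.25) -> list[int]:
    """Return the indices of days whose count moved by more than `threshold`
    (as a fraction, up or down) compared with the previous day."""
    flagged = []
    for i in range(1, len(daily_counts)):
        previous = daily_counts[i - 1]
        if previous == 0:
            continue  # no baseline to compare against
        change = abs(daily_counts[i] - previous) / previous
        if change > threshold:
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    # We requested the test page 100 times; the tool reported 93 (made-up figure).
    print(f"Test page error: {relative_error(100, 93):+.0%}")
    # Hypothetical unique-browser counts with a drop of about 33% on one day.
    print("Days to investigate:", flag_anomalies([50_000, 49_500, 50_200, 33_600, 50_100]))
```

Note that the day after a sudden drop is flagged too, because the count jumps back up. That is deliberate: a fall followed by an instant recovery looks more like a tracking fault than a change in user behaviour.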

Interpreting the numbers incorrectly

Even if the numbers are correct, are we sure we know what they mean? Take the following scenario –

A website has been trundling along with 50,000 unique browsers a day who, on average, look at three pages each visit. The site is due a redesign and the target is not just to attract more unique browsers but also to get them to view more pages during each visit. After the new website is launched, unique browsers disappointingly don’t change much, but page views per visit double to an average of six! That’s great, a partial success at least, and the champagne is popped. Unfortunately, some time later someone bothers to ask the users what they think and discovers that the site navigation is terrible and that users are getting confused and looking at more pages trying to find content they could easily find before.

Not such a success after all. The problem with web stats is that, even when correct, they can tell you what’s happening but not why. Only your users can tell you that.

Using charts to lie

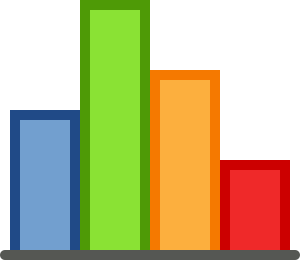

Look at the two charts below. One is trying to be honest while the other is an outright lie.

This one is the more honest one as the Y axis starts at zero and doesn’t skew our visual perception of the differences between the data points. It also shows the complete data set. We might read this as –

‘January was a good month and we’ve struggled a bit to get back where we were but it looks like the trend is going in the right direction.’

This one uses the same data but I have lied in two distinct ways. I’ve started the Y axis at 1400, meaning that the differences between the data points are exaggerated, and I’ve only used the last six months’ data. If we look at this the only interpretation is –

‘A complete success with massive gains being made every month and everything is going in the right direction.’

Or in other words a lie.

For some reason, if data is nicely presented as a line or bar chart or a nice colourful pie, people tend to believe what it’s saying. If we ‘spin’ our charts to make things look better than they really are, all we are doing is dressing up a lie to look good.
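To put a number on how much a truncated Y axis exaggerates, here is a small Python sketch. The two data points (1500 and 1800) are hypothetical, since the real figures behind the charts aren’t listed here; only the axis start of 1400 comes from the example above. The second function computes a ratio sometimes called the ‘lie factor’: the change as drawn divided by the change in the data.

```python
def drawn_ratio(low: float, high: float, axis_start: float = 0.0) -> float:
    """How many times taller the `high` bar is drawn than the `low` bar
    when the Y axis starts at `axis_start`."""
    if axis_start >= min(low, high):
        raise ValueError("axis_start must be below both values")
    return (high - axis_start) / (low - axis_start)


def lie_factor(low: float, high: float, axis_start: float) -> float:
    """Relative change as drawn on the chart divided by the relative change in the data.
    A value of 1.0 means the chart is visually honest."""
    if low <= 0 or high == low:
        raise ValueError("need a positive `low` and two different values")
    if axis_start >= min(low, high):
        raise ValueError("axis_start must be below both values")
    shown_change = (high - low) / (low - axis_start)
    real_change = (high - low) / low
    return shown_change / real_change


if __name__ == "__main__":
    low, high = 1500, 1800  # hypothetical monthly figures
    print("Axis from 0:   ", round(drawn_ratio(low, high), 2))
    print("Axis from 1400:", round(drawn_ratio(low, high, 1400), 2))
    print("Lie factor:    ", round(lie_factor(low, high, 1400), 2))
```

With these made-up figures a real rise of 20% is drawn as if the second bar were four times the height of the first, which is exactly the kind of ‘massive gain’ the dishonest chart suggests.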

Basing decisions on one data source…

…is a recipe for disaster. Some organisations base decisions on the magical string of numbers produced from a black box and then, when things fall apart, wonder how that could possibly have happened. Always, always look at all your data sources, even if they tell conflicting stories (the conflict itself can tell you a lot), and especially if they are telling you something you don’t like. In my current job as a user analyst I have to be across at least five different data sources: web stats, complaints, emails, app reviews and social media. To be honest I’d like even more sources if I could get them. When the same outcomes can be seen across all user feedback and are also reflected in the web stats, this provides evidence that is both compelling and robust, evidence that will carry real weight in the argument for improving your site.

Failing to record and analyse every possible source of data about your users is perverse. Placing email links on your pages, carrying out short online surveys and finding ways of having face-to-face conversations with users is not rocket science. The one great advantage that intranet sites have over internet sites is that we know who our users are; they are all around us. Failure to take advantage of this fact is also perverse.

(The Intranet Health Check is one approach that shows how different data sources can be combined)
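One simple way to see where sources agree is to count how many of them mention the same issue. The Python sketch below is only an illustration: the five source names are the ones I listed above, but the issue labels and the idea of tagging each source with a set of issues by hand are assumptions made up for the example.

```python
from __future__ import annotations

from collections import Counter


def corroboration(findings: dict[str, set[str]]) -> list[tuple[str, int]]:
    """Count how many sources mention each issue, most corroborated first.
    Ties are broken alphabetically so the order is predictable."""
    counts = Counter(issue for issues in findings.values() for issue in issues)
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    # Hypothetical issues tagged by hand against each data source.
    findings = {
        "web stats": {"navigation", "search"},
        "complaints": {"navigation", "login"},
        "emails": {"navigation"},
        "app reviews": {"login", "navigation"},
        "social media": {"search"},
    }
    for issue, sources in corroboration(findings):
        print(f"{issue}: mentioned in {sources} of {len(findings)} sources")
```

An issue that turns up in four sources out of five is much harder to argue away than one that appears in the web stats alone.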

Indulge in wishful thinking (or trying to please the boss)

We’ve all heard the word ‘spin’. It’s all about presenting facts in the best possible light, putting a gloss on things or, in other words, lying to people. We can, of course, lie to ourselves, and this makes it easier to lie to our bosses. That is, in effect, what we’re doing if we don’t do our best to ensure we have multiple data sources that are objectively analysed. Being objective is really hard, as we all want our product, our site, to succeed, but we have to try. One unfortunate aspect of being the person who analyses user data is that we’re often the bearer of unwelcome news, and we sometimes have to tell our teams that all that sweat, tears and creativity was more or less a waste of time. However, the biggest waste of time and creativity is in not telling our teams the truth in a timely way.

I am very lucky in that the team I work for are honest and willing to accept the verdict of our users. They see this data as a pointer towards a better product and this makes my life much easier. If this is not the situation in your team then it is one that you must try to bring about. When I hold my regular catch-up with my team I spend half as much time, or less, on the ‘positive’ findings as I do on the ‘negative’ ones. The reason is that, if our priority is to improve our site for our users, the real gold is in the ‘negative’ findings because that is where we’ll find our road map to a better site. So ‘negative’ news is really very positive if viewed in this way.

We all want to tell our teams nothing but good news, but if we ‘spin’, dress up the truth or delude ourselves we let ourselves and our teams down. More than that, we let our users down. Finding out what our users really think is hard, sometimes nearly impossible, but that doesn’t mean we shouldn’t try. We may never attain a complete picture of our users’ wants and needs, as things change and change fast nowadays, but even being able to join some of the dots together will tell us a lot. 70% or 80% of the truth is a lot better than nothing, especially the nothing that we create when we won’t even admit to ourselves what is truth and what is lies.

Part 3 will be about how we can effectively analyse user comments.
